
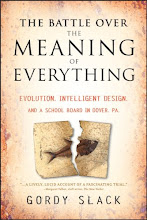

Christopher Walken in the dream-recording machine from the film Brainstorm (1983)

“This is a major leap toward reconstructing internal imagery,” said Professor Gallant, in a press release about the research. “We are opening a window into the movies in our minds.”

The demonstration, published in the Sept. 2 issue of the journal Current Biology, went like this: Subjects lay in fMRI machines that recorded the activity in their visual cortex while they watched film clips. Gallant took those "recordings" and used algorithm-driven computer searches to "translate" them into other, similar images drawn at random from YouTube. The YouTube clips served as a kind of image palette from which the original footage was reconstructed. Several reconstructions from different subjects were averaged together to get a blurry, dream-like video sequence that roughly, and eerily, corresponds to the original video. You can see the result in this diptych, which shows the video that the subjects watched on the left and the reconstruction from their brain scans on the right.
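For the curious, the match-and-average idea can be sketched in a few lines of Python. This is only a toy illustration of the general approach described above, not the Gallant group's actual method: the function names, the tiny four-pixel "frames," the made-up response vectors, and the choice of correlation as the similarity measure are all assumptions made for the example.

```python
from math import sqrt


def correlation(a, b):
    """Pearson correlation between two equal-length sequences of numbers."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # a flat signal carries no pattern to match
    return cov / sqrt(var_a * var_b)


def reconstruct(observed_response, library, top_k=2):
    """Average the top_k library frames whose predicted brain response
    most resembles the observed response.

    library is a list of (frame, predicted_response) pairs. This layout
    is hypothetical, chosen only to keep the sketch short.
    """
    ranked = sorted(
        library,
        key=lambda item: correlation(observed_response, item[1]),
        reverse=True,
    )
    best_frames = [frame for frame, _ in ranked[:top_k]]
    n_pixels = len(best_frames[0])
    # Pixel-by-pixel average of the best matches gives the blurry result.
    return [
        sum(frame[i] for frame in best_frames) / len(best_frames)
        for i in range(n_pixels)
    ]


# Toy "palette": three frames, each paired with an invented response pattern.
library = [
    ([0.0, 0.0, 1.0, 1.0], [1.0, 2.0, 3.0]),
    ([0.0, 0.2, 0.8, 1.0], [1.0, 2.1, 2.9]),
    ([1.0, 1.0, 0.0, 0.0], [3.0, 2.0, 1.0]),
]
observed = [1.1, 2.0, 3.2]  # invented "brain recording"
blurry_frame = reconstruct(observed, library, top_k=2)
```

Averaging the best matches, rather than picking a single winner, is what produces the smeared, dream-like look: no one library clip is exactly right, but what the top candidates share tends to survive the blend.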

The Gallant group is reading from the relatively straightforward visual cortex. Trying to interpret "thought" patterns elsewhere in the cerebral cortex, or distributed processes like memory and emotion, would be much, much more complex. Machines that could make meaningful reproductions of thoughts, emotions, or memories may be decades off, but they are coming. And we probably aren't ready for the ethical, legal, and emotional challenges (and opportunities) that will accompany that kind of tech. Or maybe we are: we already create representations of our internal lives all the time by making art and having conversations. In fact, aren't the original film clips already more-or-less hi-fi representations of the minds of their directors, mediated through another technology: film?

1 comment:

Brainstorm! Hey, I loved that movie when I was a kid! Nice reference....
